AI has been integrated into almost every stage of hiring, but adoption and impact are two very different things. Despite high rates of AI adoption in Talent Acquisition (TA) in 2026, only 5% of organizations rate their TA function as world-class, which suggests that high adoption alone hasn't made TA operationally mature. Somewhere between the excitement and the execution, a harder truth emerged: most organizations still can't point to measurable ROI from what they've deployed.

2026 is when the reckoning gets serious. TA leaders are beginning to change the question itself: it's no longer whether AI should be used, but which parts of it are actually working.

The following trends reflect exactly that.

Some of these shifts are already happening inside companies' Applicant Tracking Systems (ATS). Others are beginning to reshape how entire TA functions are structured. All of them are worth understanding before your next technology decision.

Agentic AI And Autonomous Workflows Become The New Operating Layer

Recruiting used to be highly manual: source, reach out, schedule, follow up, repeat. Some AI tools still operate in that mode: a prompt goes in, a response comes out, and the loop ends. Agentic AI breaks that loop. Whether the signal is a thinning pipeline, a stalled role, or low outreach volume, it can pick it up and start acting without step-by-step instructions. At that point it's less about assistance and more about parts of the workflow quietly running on their own.

| Category | Generative AI | Agentic AI |
|---|---|---|
| Core behavior | Responds when prompted | Acts without prompting |
| Example | Writes a job description | Identifies a pipeline gap and starts sourcing |
| Output | Content | Completed workflows |
| Human role | Reviews output | Sets guardrails and reviews decisions |

In practice, agentic AI can orchestrate the full hiring sequence: sourcing, outreach, screening, scheduling, and follow-ups. Generative tools such as Gemini or ChatGPT often run alongside it. They write job descriptions, personalize candidate messages, and, when integrated with interview intelligence platforms, turn a 45-minute interview into a structured summary.

The result? Work that used to fill a recruiter's week now gets done in far less time, and companies moving in this direction can expect faster hiring as well. But the technology is the easy part. The harder question (when do you trust the system, and when do you step in?) is now the real job. Human oversight remains essential, particularly in screening, shortlisting, and final selection, where bias risks and compliance obligations are highest. The teams figuring that out first are pulling ahead fast.
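To make the guardrail question concrete, here is a minimal Python sketch, assuming a hypothetical policy in which an agent may run sourcing, outreach, scheduling, and follow-ups on its own but must route screening, shortlisting, and final selection to a person. The step names, the `Action` type, and the `route` and `pipeline_gap` helpers are illustrative assumptions, not any vendor's API.

```python
from dataclasses import dataclass

# Hypothetical policy: steps the agent may execute without a human.
AUTONOMOUS_STEPS = {"sourcing", "outreach", "scheduling", "follow_up"}
# Steps where bias risk and compliance obligations are highest.
HUMAN_REVIEW_STEPS = {"screening", "shortlisting", "final_selection"}


@dataclass
class Action:
    step: str
    candidate_id: str
    detail: str


def route(action: Action) -> str:
    """Decide whether the agent may act or must hand the step to a person."""
    if action.step in HUMAN_REVIEW_STEPS:
        return "needs_review"  # outcome-affecting decision: a person decides
    if action.step in AUTONOMOUS_STEPS:
        return "execute"
    return "needs_review"  # step not covered by the policy: fail closed


def pipeline_gap(active_candidates: int, target: int) -> int:
    """How many more candidates the agent should source to reach the target."""
    return max(0, target - active_candidates)


gap = pipeline_gap(active_candidates=4, target=10)
proposed = [
    Action("sourcing", "req-17", f"source {gap} more candidates"),
    Action("shortlisting", "c-042", "add to shortlist"),
]
for action in proposed:
    print(action.step, route(action))
```

Failing closed on unrecognized steps is the design choice worth copying: anything the written policy does not explicitly hand to the agent defaults to human review.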

Skills-Based Hiring Replaces The Resume As The Primary Filter

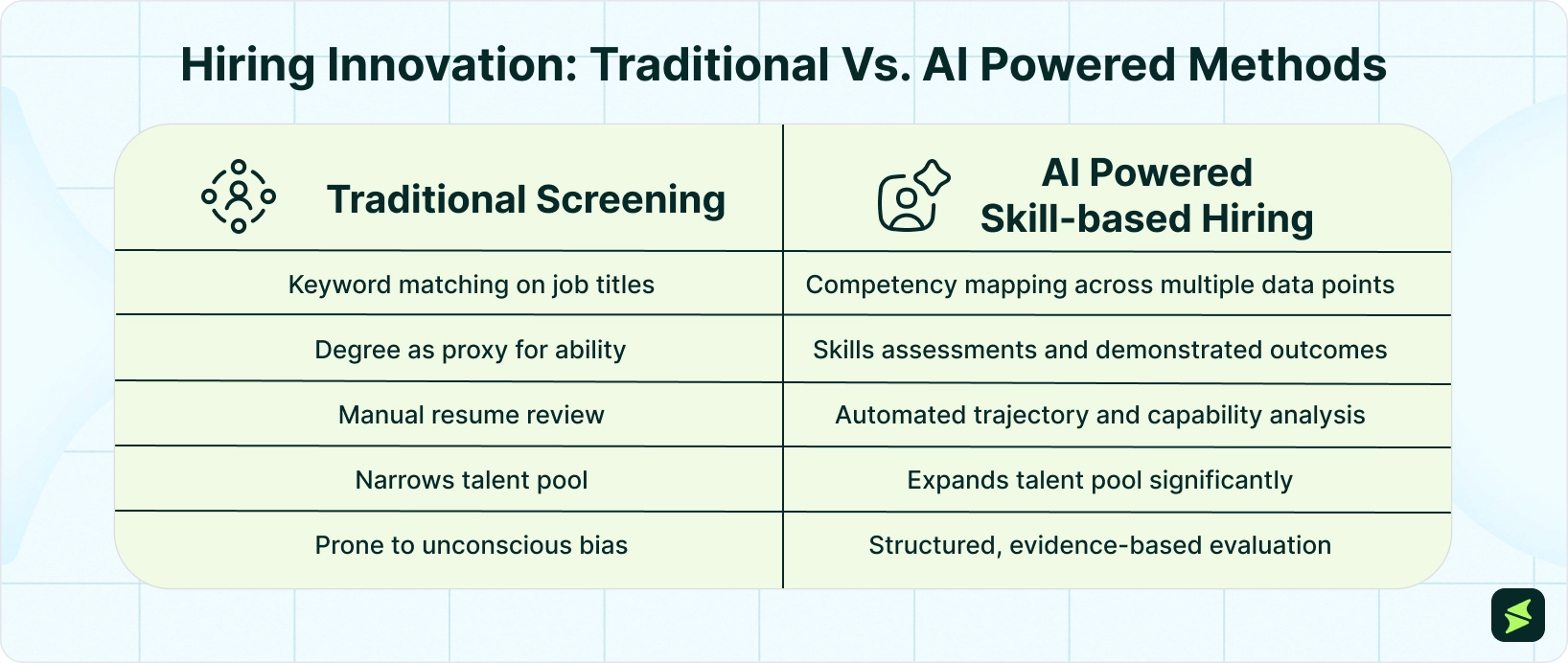

For decades, the resume was the starting point. A degree from the right school, a title that matched the job description — that was the shortlist logic. It was also, quietly, one of the most reliable ways to miss great talent.

AI is dismantling that filter. Modern hiring systems now evaluate candidates on demonstrated competencies (work samples, assessment outcomes, career progression patterns), not just where they worked or what their diploma says. A candidate who built the skill through a different path gets the same shot as one who followed the traditional route.

The business case is clear, too. LinkedIn's 2025 Future of Recruiting report shows that companies running skills-based searches are 12% more likely to make a quality hire. And as more employers adopt skills assessments, they are quickly becoming the expected standard.

The shift, put simply, is from "Have you done this?" to "Can you do this well?" and that's a fundamentally different foundation for a hiring process.
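One way to picture a skills-first filter is a weighted match between the competencies a role requires and the evidence a candidate has demonstrated. The sketch below assumes hypothetical skill names, weights, and evidence levels on a 0-to-1 scale (for example, normalized assessment scores); degree and employer are deliberately not inputs.

```python
def skills_score(required: dict[str, float], evidence: dict[str, float]) -> float:
    """Weighted share of the required skills a candidate has demonstrated, in [0, 1].

    required: skill -> importance weight for the role (hypothetical values)
    evidence: skill -> demonstrated level in [0, 1], e.g. a normalized assessment score
    """
    total_weight = sum(required.values())
    if total_weight == 0:
        return 0.0
    earned = 0.0
    for skill, weight in required.items():
        level = min(1.0, max(0.0, evidence.get(skill, 0.0)))  # clamp out-of-range inputs
        earned += weight * level
    return earned / total_weight


role = {"sql": 2.0, "python": 2.0, "stakeholder_communication": 1.0}
# Two hypothetical candidates: how they acquired the skills is not an input.
bootcamp_grad = {"sql": 0.9, "python": 0.8, "stakeholder_communication": 0.6}
degree_holder = {"sql": 0.5, "python": 0.4}

for name, ev in [("bootcamp_grad", bootcamp_grad), ("degree_holder", degree_holder)]:
    print(name, round(skills_score(role, ev), 2))
```

A real system would source the evidence from assessments and work samples rather than hand-entered numbers; the point is that the score answers "Can you do this well?" without asking where the skill came from.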

Predictive Talent Analytics Moves Hiring From Reactive To Strategic

Most hiring still starts the same way: a role opens, a requisition gets posted, and the search begins. It's reactive by design. And it costs more than most teams realize: lost time, rushed decisions, and roles sitting open longer than they should.

The good news? There's a clear maturity curve, and most organizations are only at the beginning of it.

Analytics Maturity Model

| Stage | What It Does | Real Output |
|---|---|---|
| Descriptive | Reports what happened | “We filled 45 roles last quarter” |
| Diagnostic | Explains why | “Drop-off spiked at phone screen” |
| Predictive | Forecasts what’s coming | “We’ll need 12 engineers by Q3” |
| Prescriptive | Recommends action | “Start sourcing passive candidates in January” |

Based on industry benchmarks, most TA teams operate at the first two stages, descriptive or diagnostic. The performance gap emerges at the third, predictive stage, where hiring shifts from reactive reporting to predictive planning.

Moving from descriptive to predictive changes the starting point entirely. Instead of waiting for jobs to open, leading teams use past data, attrition signals, and real-time workforce insights to predict hiring needs and fix gaps before they become urgent.

In practice: a system flags that an engineering team is likely to shrink in Q2, starts building a candidate pipeline in Q1, and has a shortlist ready before anyone's even posted the job.

That's not a futuristic scenario. It's happening now, and the pressure to get there is growing, since many workers will need new core skills within the next few years. The teams getting this right are making TA a function that plans alongside the business rather than one that scrambles to keep up with it.
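The predictive step in that example can be sketched with simple arithmetic: expected backfills from attrition plus planned growth, and a sourcing start pulled forward by the typical time-to-fill. This is a minimal illustration under stated assumptions (a constant quarterly attrition rate, every opening filled on time, hypothetical figures), not the forecasting model of any particular platform.

```python
import math


def forecast_hires(headcount: int, quarterly_attrition: float,
                   growth_per_quarter: int, quarters: int) -> list[int]:
    """Hires needed in each upcoming quarter: attrition backfills plus planned growth.

    Assumes a constant attrition rate and that every opening is filled on time.
    """
    needs = []
    for _ in range(quarters):
        # Round up: an expected fractional departure still means one hire.
        backfills = math.ceil(headcount * quarterly_attrition)
        needs.append(backfills + growth_per_quarter)
        headcount += growth_per_quarter  # backfills keep headcount flat; growth adds to it
    return needs


def sourcing_start(need_quarter: int, time_to_fill_quarters: int) -> int:
    """Quarter in which sourcing should begin so a shortlist exists when the need arrives."""
    return need_quarter - time_to_fill_quarters


# Hypothetical engineering team: 40 people, 5% quarterly attrition, 2 new roles per quarter.
print(forecast_hires(40, 0.05, 2, quarters=3))
# A need expected in Q2 with a one-quarter time-to-fill means sourcing starts in Q1.
print(sourcing_start(need_quarter=2, time_to_fill_quarters=1))
```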

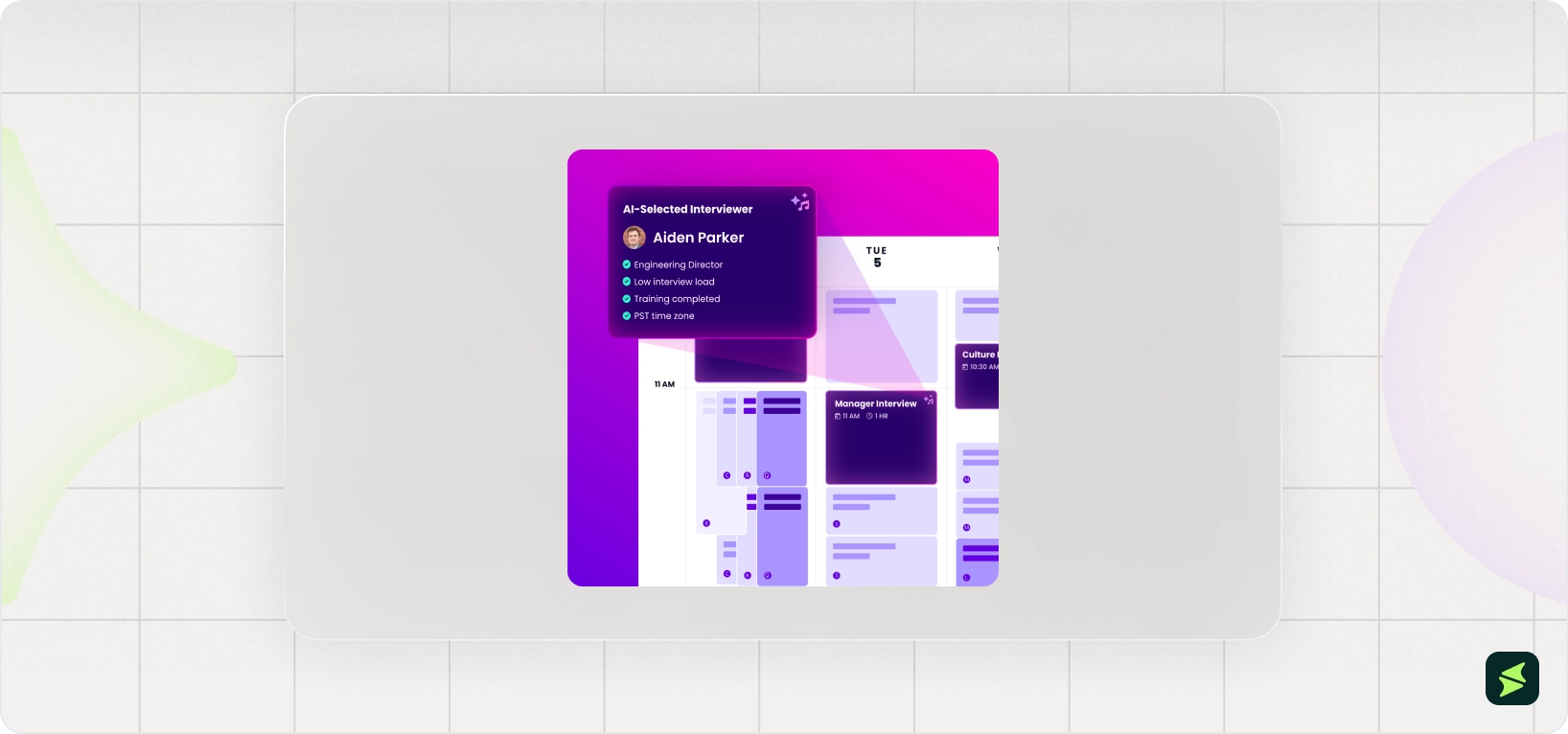

AI Interview Intelligence Builds Consistency Across Hiring Teams

Two interviewers walk out of the same interview with completely different impressions. One loved the candidate. One wasn't sure. Neither can fully explain why, and their notes don't help either.

This is the traditional interview problem. And AI is fixing it.

Modern interview tools operate across three layers:

- Transcription technology first captures and converts interviews into searchable text

- Evaluation AI then analyzes those transcripts to identify themes, competencies, and potential skill signals

- Decision-support tools present those insights in a structured format, giving interviewers a shared reference point instead of relying on scattered notes or memory
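The three layers above can be illustrated as a small pipeline. In this sketch the transcription layer is assumed to have already produced text segments, and a simple keyword lookup stands in for the evaluation model; the rubric, cue phrases, and function names are hypothetical. What it shows is the decision-support idea: every interviewer sees the same structured view, including competencies for which no evidence was captured.

```python
from collections import defaultdict

# Hypothetical competency rubric; keyword cues stand in for a real evaluation model.
RUBRIC = {
    "ownership": ["i led", "i owned", "took responsibility"],
    "collaboration": ["we worked", "partnered", "cross-functional"],
    "debugging": ["root cause", "reproduced", "logs"],
}


def tag_transcript(segments: list[str]) -> dict[str, list[str]]:
    """Evaluation layer: map each competency to the transcript segments that signal it."""
    evidence = defaultdict(list)
    for segment in segments:
        lowered = segment.lower()
        for competency, cues in RUBRIC.items():
            if any(cue in lowered for cue in cues):
                evidence[competency].append(segment)
    return dict(evidence)


def summarize(evidence: dict[str, list[str]]) -> list[str]:
    """Decision-support layer: one line per rubric competency, gaps included."""
    lines = []
    for competency in RUBRIC:
        quotes = evidence.get(competency, [])
        status = f"{len(quotes)} signal(s)" if quotes else "no evidence captured"
        lines.append(f"{competency}: {status}")
    return lines


transcript = [
    "I led the migration to the new billing system.",
    "We worked with finance on the rollout.",
    "Then I reproduced the bug and found the root cause.",
]
for line in summarize(tag_transcript(transcript)):
    print(line)
```

Showing "no evidence captured" explicitly is what gives two interviewers a shared reference point: disagreement shifts from impressions to which competencies were actually probed.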

Candidates also benefit directly: rather than waiting days for a callback to schedule next steps, AI-powered self-scheduling puts the control in their hands.

The operational impact is real: Stanford Health Care's AI chatbot generated over 250,000 candidate interactions, 35,000 unique visits, and 11,000+ candidate leads while recruiter support tickets dropped from 50/week to one or two.

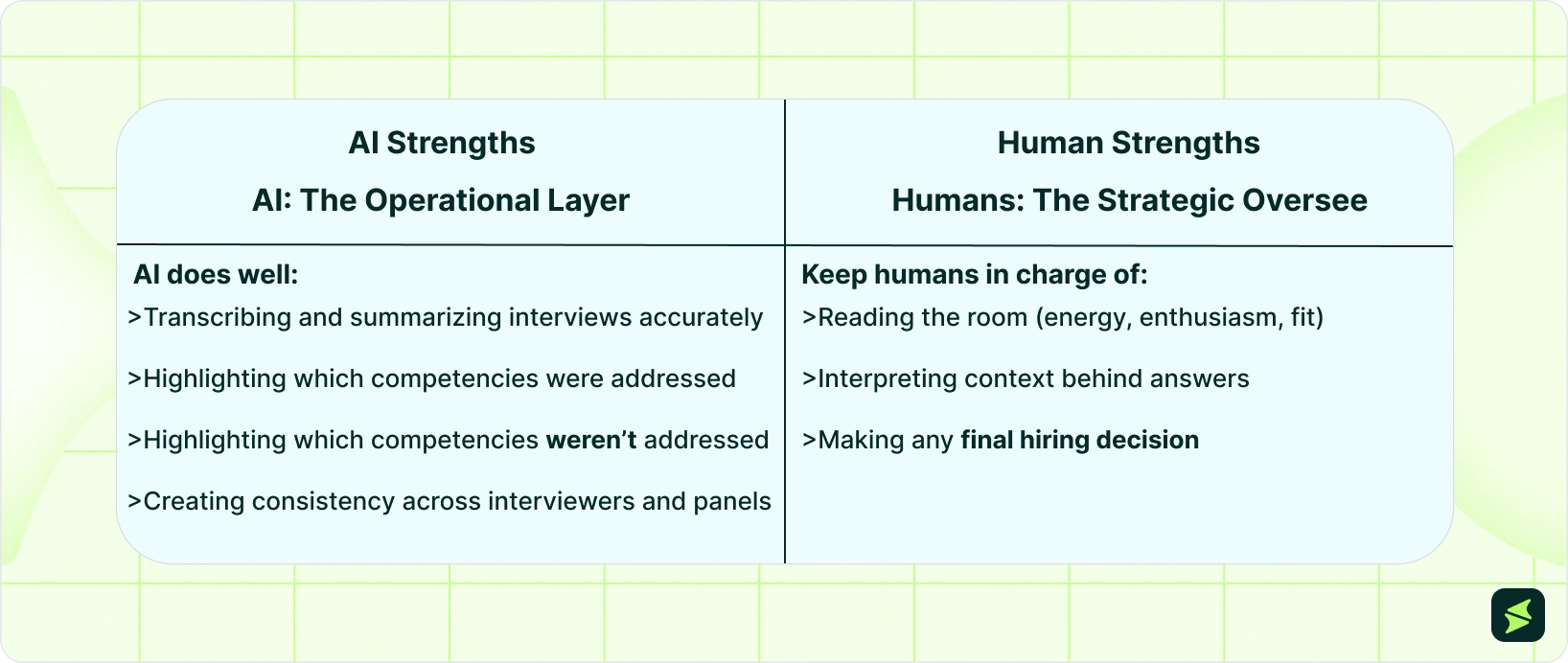

There is a nuance here, and it is worth drawing a line:

Note: Facial expression analysis and emotion detection still sit in a gray area in 2026, both legally and ethically, so most teams steer clear of them. What AI does better is consistency across evaluations. The final decision, though, still belongs with people.

Candidate Experience Gets Personalized At Scale

The best candidates have options, and increasingly they're using AI in their own job search to navigate those options and gain an edge. If your hiring process feels slow, impersonal, or silent, they'll find one that doesn't.

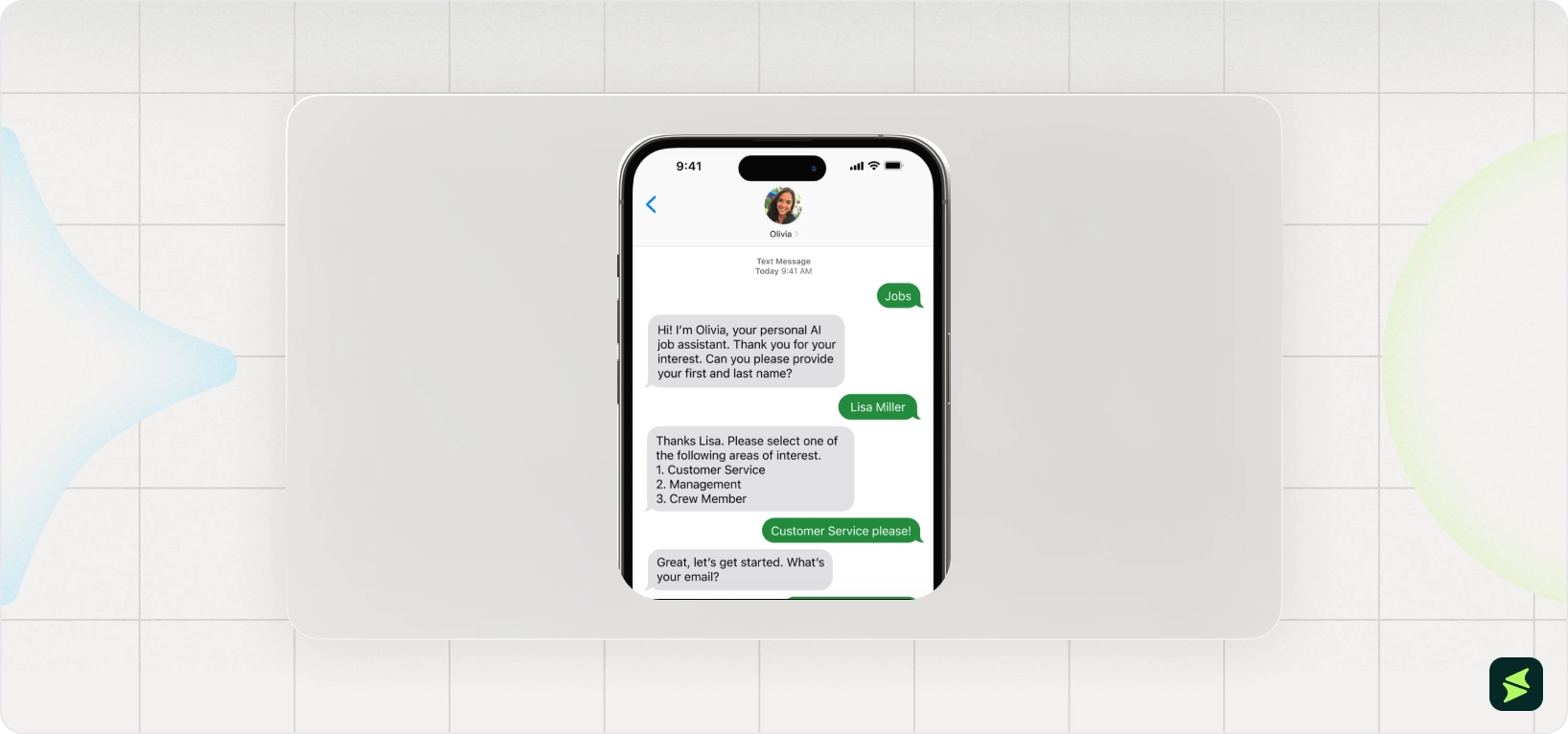

One of the easiest ways to lose good applicants is poor communication. AI closes those gaps directly with automated status updates and self-service interview scheduling. Chatbots handle basic questions at any hour, and role suggestions arrive while interest is still high rather than days later, when attention has already moved on.

The image above of Paradox's software shows exactly what a candidate sees on their phone: a text-based conversation that screens, schedules, and answers questions without any recruiter involvement. In many enterprise setups, this interface is powered by broader agentic workflows that handle orchestration behind the scenes. Nestlé and General Motors use this interface.

The results are measurable. CareerPlug's 2025 Candidate Experience Report found that 66% of candidates say a positive hiring experience directly influences their decision to accept an offer. On the flip side, 26% rejected an offer specifically because of poor communication during the process. Those lost hires are the predictable cost of a hiring process that doesn't keep candidates informed.

The speed gap compounds the problem. GoodTime's 2026 Hiring Insights Report found that 60% of organizations saw time-to-hire increase in 2025, while only 1 in 9 managed to reduce it. The best candidates don't wait for slow processes; they accept whichever offer arrives first.

AI-powered candidate experience tools address this at every touchpoint. They minimize the silence, remove friction, and ensure that every applicant gets a response and a reason to stay engaged, regardless of how many people applied that day.
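Minimizing the silence is largely a scheduling rule. A minimal sketch, assuming a hypothetical three-day maximum gap between candidate touchpoints and an in-memory record of each candidate's last contact date (a real tool would read both from the ATS):

```python
from datetime import date


def overdue_for_update(last_contact: dict[str, date], today: date,
                       max_silence_days: int = 3) -> list[str]:
    """IDs of candidates who have gone longer than max_silence_days without hearing back."""
    return sorted(cid for cid, contacted in last_contact.items()
                  if (today - contacted).days > max_silence_days)


def status_message(first_name: str, stage: str) -> str:
    """A plain status update, so silence is never mistaken for rejection."""
    return (f"Hi {first_name}, your application is at the {stage} stage. "
            "There's no decision yet, and we'll message you as soon as it moves.")


last_contact = {"c-1": date(2026, 3, 1), "c-2": date(2026, 3, 9), "c-3": date(2026, 3, 6)}
for cid in overdue_for_update(last_contact, today=date(2026, 3, 10)):
    print(cid, "->", status_message("there", "interview"))
```

Because the rule runs the same way for every applicant, it also delivers the uniform treatment that keeps hourly and first-generation candidates from silently dropping out.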

What most teams miss is the second-order effect. Besides frustrating candidates, slow and inconsistent communication also disproportionately filters out specific groups: hourly workers who can't follow up during business hours, for instance, or first-generation job seekers who interpret silence as rejection. When AI removes that friction uniformly, the composition of the candidate pipeline changes, as more candidates progress through the process instead of dropping out due to delays or communication gaps. Not because targeting changed, but because the process stopped disqualifying people before a human ever saw their name.

Ethical And Regulatory AI Hiring Becomes Mandatory

Not long ago, AI compliance in hiring was a legal team footnote. In 2026, it's a board-level conversation. The regulatory environment has hardened fast with lawsuits, enforcement actions, and daily financial penalties now on the table. If your organization uses AI to screen, rank, or evaluate candidates, you're already operating in regulated territory.

NYC Local Law 144 requires any employer using Automated Employment Decision Tools (AEDTs) in New York City to conduct annual independent bias audits, publish the results publicly, and notify candidates before AI evaluates them. Penalties run up to $1,500 per violation, and each day of noncompliant use counts as a separate violation. A December 2025 audit by the New York State Comptroller found that most employers aren't even close to compliant.
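At the core of an LL144 bias audit is the impact ratio: each category's selection rate divided by the selection rate of the most-selected category. A minimal sketch with hypothetical counts follows. The 0.8 flag borrows the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures; LL144 requires publishing impact ratios but does not itself set a pass/fail threshold, and a real audit must cover the law's required sex, race/ethnicity, and intersectional categories and be performed by an independent auditor.

```python
def impact_ratios(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate of each category divided by the highest category selection rate."""
    rates = {g: selected.get(g, 0) / n for g, n in applicants.items() if n > 0}
    top = max(rates.values(), default=0.0)
    if top == 0:
        raise ValueError("no one was selected, so impact ratios are undefined")
    return {g: rate / top for g, rate in rates.items()}


def flag_below(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Categories under the four-fifths rule of thumb (a heuristic, not an LL144 threshold)."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Hypothetical audit counts for two categories.
applicants = {"category_a": 200, "category_b": 150}
selected = {"category_a": 60, "category_b": 30}
ratios = impact_ratios(applicants, selected)
print({g: round(r, 2) for g, r in ratios.items()})
print(flag_below(ratios))
```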

The European Union AI Act classifies recruitment AI as high-risk, placing it in the same regulatory category as medical devices and critical infrastructure. Enforcement became active in August 2026. If your organization hires in Europe or uses tools built by EU-based vendors, this applies to you. In the U.S., the EEOC has established at the federal level that employers are liable for algorithmic disparate impact under Title VII, even when the bias is unintentional and the tool belongs to a third-party vendor.

The candidate trust problem amplifies the legal risk.

The Greenhouse AI Hiring Report found a widening trust gap in AI-driven hiring. While 70% of hiring managers trust AI decisions, only 8% of job seekers consider AI-based hiring processes fair, and nearly half of candidates report declining trust in hiring overall.

That's not a fringe position; it's a majority of your candidate pool distrusting your process before they even start. Transparency helps close that gap: candidates who know how AI is being used in your process are much more comfortable with it. That makes disclosure not only a legal requirement but also a competitive advantage.

What ethical AI hiring actually requires in practice:

- Annual bias audits conducted by an independent third party, not the vendor

- Notifying candidates prior to their evaluation by AI, rather than after

- Human review at every decision point that materially affects a candidate's outcome

- Documented decision trails, so you can explain every AI recommendation if challenged

- Vendor contracts that explicitly include compliance guarantees and audit rights
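A documented decision trail can be as simple as one structured record per AI recommendation, written to an append-only log. The sketch below assumes a hypothetical record schema (candidate, step, the AI's recommendation and stated rationale, the model version, and the human reviewer's decision); field names should follow whatever your ATS and legal team require.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    candidate_id: str
    step: str                 # e.g. "screening", "shortlisting"
    ai_recommendation: str    # what the tool suggested
    ai_rationale: str         # the reason the tool gave, kept verbatim
    model_version: str        # lets the recommendation be traced to a specific model
    reviewer: Optional[str] = None
    human_decision: Optional[str] = None
    recorded_at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def is_reviewed(self) -> bool:
        """True once a named person has made the decision that affects the candidate."""
        return self.reviewer is not None and self.human_decision is not None


def to_log_line(record: DecisionRecord) -> str:
    """Serialize one record as a JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


pending = DecisionRecord("c-042", "shortlisting", "advance",
                         "assessment score above role benchmark", "screen-model-v2")
reviewed = DecisionRecord("c-043", "shortlisting", "reject", "missing required SQL evidence",
                          "screen-model-v2", reviewer="recruiter-7", human_decision="advance")
print(pending.is_reviewed(), reviewed.is_reviewed())
print(to_log_line(reviewed))
```

Keeping the AI's recommendation and the human decision side by side makes overrides (like the one in `reviewed`) visible, which is exactly what you need to explain if a decision is challenged.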

The organizations that treat this as a compliance burden will be reactive, expensive, and exposed. The ones that treat it as a trust-building opportunity will be the ones candidates — and regulators — actually want to work with.

Programmatic Job Advertising Optimizes For Quality, Not Just Clicks

Most recruitment advertising still works the same way it did fifteen years ago. Write a job post, pick a few job boards, set up a budget, and hope the right people apply. Then measure success by how many clicks came in.

The problem is obvious once you say it out loud: clicks don't make hires. Qualified candidates do.

That's the shift programmatic recruiting software makes. Instead of placing ads and waiting, AI-powered programmatic platforms evaluate every channel in real time (job boards, social platforms, search engines, niche sites) and automatically redirect budget toward the sources actually producing qualified applicants.

Channels that generate clicks, but no hires, get defunded. Channels that convert get more spend. The whole system runs continuously, without a recruiter manually pulling levers.
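That reallocation loop can be sketched as follows: measure qualified applicants per dollar for each channel, then split the next period's budget in proportion, keeping a small floor so no channel goes completely dark and stops producing data. This is an illustrative proportional rule with hypothetical channel names and numbers; commercial programmatic platforms use their own bidding logic.

```python
def reallocate(budget: float, spend: dict[str, float], qualified: dict[str, int],
               floor_share: float = 0.05) -> dict[str, float]:
    """Next period's budget per channel, weighted by qualified applicants per dollar."""
    channels = list(spend)
    if floor_share * len(channels) > 1:
        raise ValueError("floor_share is too large for this many channels")
    floor = budget * floor_share                 # minimum spend so every channel stays measurable
    remainder = budget - floor * len(channels)
    efficiency = {c: qualified.get(c, 0) / spend[c] if spend[c] > 0 else 0.0 for c in channels}
    total = sum(efficiency.values())
    allocation = {}
    for c in channels:
        # If no channel produced qualified applicants, split the remainder evenly.
        share = efficiency[c] / total if total > 0 else 1 / len(channels)
        allocation[c] = floor + remainder * share
    return allocation


spend = {"job_board": 4000.0, "social": 3000.0, "niche_site": 3000.0}
qualified = {"job_board": 8, "social": 0, "niche_site": 12}
plan = reallocate(10000.0, spend, qualified)
for channel, amount in plan.items():
    print(f"{channel}: {amount:,.0f}")
```

Here the channel that drew clicks but no qualified applicants drops to the floor, while the niche site, which converted best per dollar, receives the largest share.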

More broadly, recruiting teams still spend a significant share of their time on manual coordination. GoodTime's 2026 Hiring Insights Report (an independent study of 500+ U.S. TA leaders) found that recruiters spend 38% of their time on interview scheduling alone, making it the single biggest operational drain on hiring teams. That's before accounting for manual job posting, channel management, and budget reallocation across platforms. AI-powered programmatic advertising removes much of that advertising overhead, so recruiters can focus on candidates rather than campaigns.

The metrics driving these decisions have also changed. Forward-thinking TA teams have moved away from cost-per-click as the primary measure and toward a more complete funnel view.

| Old Metric | What It Measures | New Metric | What It Actually Tells You |
|---|---|---|---|
| Cost/click | Ad engagement | Cost/qualified candidate | Budget efficiency against hiring outcomes |
| Total applicants | Volume | Qualified apply rate | Signal-to-noise ratio in your pipeline |
| Impressions | Visibility | Time-to-fill by source | Which channels actually speed up hiring |
| Job board spend | Input | | True ROI on every advertising dollar |
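The new metrics in the table's first three rows fall out of data most ATS and advertising platforms already hold. A minimal sketch, assuming hypothetical per-source totals for spend, applicants, qualified applicants, and days-to-fill for each hire:

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Optional


@dataclass
class SourceStats:
    spend: float
    applicants: int
    qualified: int
    days_to_fill: list[int] = field(default_factory=list)  # one entry per hire from this source


def outcome_metrics(s: SourceStats) -> dict[str, Optional[float]]:
    """Outcome-based metrics for one source; None where the denominator is zero."""
    return {
        "cost_per_qualified_candidate": s.spend / s.qualified if s.qualified else None,
        "qualified_apply_rate": s.qualified / s.applicants if s.applicants else None,
        "avg_time_to_fill_days": mean(s.days_to_fill) if s.days_to_fill else None,
    }


sources = {
    "job_board_a": SourceStats(spend=5000.0, applicants=400, qualified=20, days_to_fill=[30, 42]),
    "niche_site_b": SourceStats(spend=3000.0, applicants=60, qualified=15, days_to_fill=[21]),
}
for name, stats in sources.items():
    print(name, outcome_metrics(stats))
```

Returning `None` instead of zero for empty denominators keeps a source with no hires from looking deceptively cheap or fast.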

The shift from volume-based to outcome-based advertising isn't a future trend. It's already separating the TA teams hitting their hiring goals from the ones constantly scrambling to explain why their pipeline is full of the wrong people.

AI is what makes that shift operationally possible.

AI Vendor Consolidation Is Reshaping The Entire Market

The HR technology market is consolidating fast, and a smaller set of platforms is emerging to define how modern hiring stacks are built. Organizations now evaluate AI recruitment software less as isolated tools and more as end-to-end systems that replace fragmented workflows. Enterprise buyers are reducing the number of tools they use and moving toward connected systems where AI works across shared data instead of isolated silos.

In August 2025, Workday agreed to acquire Paradox (the conversational AI platform used by Medtronic, FedEx, and Unilever) and completed the deal by October. This followed Workday's earlier acquisition of HiredScore, an AI talent orchestration tool. The outcome is a single platform combining AI-driven talent discovery, candidate engagement, interview scheduling, and recruiting workflows.

During the same period, SAP acquired SmartRecruiters, a high-volume recruiting and AI candidate engagement platform. Aptitude Research founder Madeline Laurano summed up the moment simply: "This is a big year in HR tech."

These aren't isolated deals; they're a pattern. According to Drake Star's managing partner, interviewed by UNLEASH, the defining trend of HR tech in 2025 was "the shift from point solutions to platforms, and the resulting consolidation based on that movement." The message from the market was clear: enterprise buyers were consolidating their tech stacks, not adding to them. S&P Global's February 2026 HR tech analysis reinforced why: standalone tools that can't differentiate are losing ground as platform players absorb those capabilities into their core suites.

And there's a deeper reason this matters than saving money on software licenses: AI runs on connected data. Feed it a fragmented stack (a sourcing tool here, an ATS there, analytics sitting somewhere else) and its insights are limited by the gaps between those systems.

Josh Bersin's HR Technology Market Report found that large companies now run at least nine HR systems and spend an average of $310 per employee per year on HR technology, up 29% year over year. That fragmentation is expensive, and it erodes the ROI of every AI investment sitting on top of it.

In 2026, the winning move isn't buying more AI tools; it's connecting the ones you already have. When data flows freely across sourcing, screening, scheduling, and analytics, AI finally has what it needs to do its job: faster decisions, better hires.

Here's something the AI adoption conversation keeps glossing over:

While companies race to automate, many are quietly cutting the entry-level roles that have built their leadership pipelines for decades. Some 43% of companies plan to replace roles with AI this year, starting with back-office and entry-level positions. The short-term cost savings look compelling.

The consequences show up later, when there's nobody internally ready to step into management. This is because entry-level roles aren't just execution work; they're how people learn how an organization actually operates. Cut them too aggressively, and the talent pipeline above them eventually runs dry.

What makes this particularly worth paying attention to right now is the maturity gap underneath it. Phenom's 2026 Benchmarks Report found that 83% of organizations sit in the lowest two levels of AI maturity. Most aren't operating AI that's sophisticated enough to replace the human judgment they're removing. The sequencing is off, and organizations that plan carefully around this will have a structural advantage over those that don't.

That planning starts with being honest about what AI is actually good at and what still belongs to people. In recruiting, that line is getting clearer. AI handles the transactional work (sourcing, screening, scheduling, status updates) with a speed and consistency no human team can match. What it can't do is read a candidate's hesitation, navigate a complicated relationship with a hiring manager, or make a judgment call that requires context the data doesn't capture.

That's exactly where the recruiter's role is heading, and it sits at the center of the broader debate over AI versus human decision-making in hiring. The line between automation and judgment is no longer theoretical; it's being drawn inside everyday recruiting workflows. Some 73% of TA leaders say critical thinking is their most needed skill in 2026, ranked above AI skills, and LinkedIn has found that demand for relationship-building skills in recruiter job postings surged 54x in the past year. The recruiters pulling ahead are the ones using AI to eliminate administrative noise so they can focus on the work that actually requires a human in the room.

Most AI recruiting implementations fail for the same reason: the technology was ready before the organization was. Before adding more tools, get these six things right.

Org Readiness

AI in recruiting works best when there's a clear owner: someone accountable for how it's deployed, monitored, and improved. Without that, tools get purchased, used inconsistently, and eventually abandoned.

A 2025 Work Reimagined survey found that while roughly 9 in 10 employees use AI at work, only 28% of organizations have translated those deployments into high-value outcomes. Part of that gap between adoption and impact comes down to ownership and governance. Identify who owns AI in your TA function before the next purchase order is signed.

Data Readiness

AI is only as good as the data it uses. If your ATS has incomplete candidate profiles, inconsistent job codes, or years of unstructured historical data, your AI will surface unreliable recommendations. Audit your data quality before deploying any AI matching or analytics tool, because its output will directly reflect what's underneath it.
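A first-pass data audit can be a few counts over an ATS export. The sketch below assumes hypothetical field names (`candidate_id`, `job_code`, `source`, `stage`) and a hypothetical set of valid job codes; it reports the share of records that are incomplete, carry an unrecognized job code, or repeat an earlier candidate ID.

```python
REQUIRED_FIELDS = ("candidate_id", "job_code", "source", "stage")  # hypothetical ATS schema


def audit_records(records: list[dict], valid_job_codes: set[str]) -> dict[str, float]:
    """Share of records (0.0 to 1.0) affected by each data-quality problem."""
    n = len(records)
    if n == 0:
        return {"incomplete": 0.0, "invalid_job_code": 0.0, "duplicate_id": 0.0}
    # Missing or empty required field.
    incomplete = sum(1 for r in records if any(not r.get(f) for f in REQUIRED_FIELDS))
    # Job code present but not in the approved list (e.g. "eng1" vs "ENG-01").
    invalid_code = sum(1 for r in records
                       if r.get("job_code") and r["job_code"] not in valid_job_codes)
    seen, duplicates = set(), 0
    for r in records:
        cid = r.get("candidate_id")
        if cid in seen:
            duplicates += 1
        elif cid:
            seen.add(cid)
    return {"incomplete": incomplete / n, "invalid_job_code": invalid_code / n,
            "duplicate_id": duplicates / n}


export = [
    {"candidate_id": "c-1", "job_code": "ENG-01", "source": "referral", "stage": "applied"},
    {"candidate_id": "c-2", "job_code": "ENG-01", "source": "", "stage": "screen"},
    {"candidate_id": "c-3", "job_code": "eng1", "source": "job_board", "stage": "applied"},
    {"candidate_id": "c-1", "job_code": "ENG-01", "source": "referral", "stage": "onsite"},
]
print(audit_records(export, valid_job_codes={"ENG-01", "SAL-02"}))
```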

Process Redesign

Layering AI on top of a broken process doesn't fix it; instead, it accelerates the breakdowns. The organizations seeing the strongest results from AI aren't automating their old workflows. They're redesigning them around what AI can now do. Map your current hiring funnel, identify where delays and drop-offs happen, and build AI into those points specifically.
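Mapping the funnel to find where drop-offs happen comes down to stage-to-stage conversion rates. A minimal sketch, assuming hypothetical stage names and counts for a single requisition type:

```python
def conversion_rates(stages: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Conversion from each stage to the next, e.g. ('phone_screen -> onsite', 0.8)."""
    rates = []
    for (name, count), (next_name, next_count) in zip(stages, stages[1:]):
        if count > 0:
            rates.append((f"{name} -> {next_name}", next_count / count))
    return rates


def weakest_transition(stages: list[tuple[str, int]]) -> tuple[str, float]:
    """The transition that loses the largest share of candidates."""
    return min(conversion_rates(stages), key=lambda pair: pair[1])


funnel = [("applied", 1000), ("phone_screen", 300), ("onsite", 240), ("offer", 60), ("accepted", 45)]
for transition, rate in conversion_rates(funnel):
    print(f"{transition}: {rate:.0%}")
print("weakest:", weakest_transition(funnel))
```

A low rate is not automatically a problem, since early stages are meant to filter; comparing each transition against your own history is what shows where AI should be built in.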

Recruiter Upskilling

Critical thinking keeps coming up as the top skill for recruiters in 2026, ahead of AI proficiency on its own. Most of the value sits in interpretation: knowing when to trust an AI output and when not to. The investment that matters is teaching recruiters to interpret AI outputs, spot when suggestions are wrong, and make decisions that the data alone can't support. That's a different kind of training from what most businesses are doing right now.

Governance And Policy

Without a documented AI policy, practice tends to drift, and each recruiter ends up improvising their own approach. Some lean heavily on AI suggestions; others barely use them at all. Both extremes create inconsistency. A clear internal policy sets the boundaries: where AI can support the process, where human judgment takes over, and how candidates are informed along the way. In New York City, Local Law 144 already mandates parts of this for employers, and similar rules are starting to appear in other jurisdictions as well.

Measuring ROI

HR leaders often see no measurable ROI from their AI initiatives despite strong adoption. The pattern is consistent: recruiting software gets deployed, but the organization never sets baselines. Define your starting point before deployment (current cost-per-hire, time-to-fill, and quality-of-hire by source), then measure the same metrics afterward.
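Comparing the same metrics before and after deployment only works if the direction of "better" is explicit for each one. A minimal sketch, assuming hypothetical metric names and values (quality-of-hire here stands for whatever score your organization already tracks):

```python
LOWER_IS_BETTER = {"cost_per_hire", "time_to_fill_days"}  # all other metrics: higher is better


def compare_to_baseline(baseline: dict[str, float],
                        after: dict[str, float]) -> dict[str, tuple[float, bool]]:
    """Percent change per metric and whether it moved in the desired direction."""
    report = {}
    for metric, before in baseline.items():
        if metric not in after or before == 0:
            continue  # no comparable measurement
        change_pct = (after[metric] - before) * 100 / before
        improved = change_pct < 0 if metric in LOWER_IS_BETTER else change_pct > 0
        report[metric] = (change_pct, improved)
    return report


baseline = {"cost_per_hire": 4000.0, "time_to_fill_days": 40.0, "quality_of_hire": 3.5}
after = {"cost_per_hire": 3400.0, "time_to_fill_days": 44.0, "quality_of_hire": 3.8}
for metric, (change, improved) in compare_to_baseline(baseline, after).items():
    print(f"{metric}: {change:+.1f}% ({'better' if improved else 'worse'})")
```

In this hypothetical, cost-per-hire and quality-of-hire improve while time-to-fill gets worse, the kind of mixed result that only shows up if the baseline was recorded first.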

The opportunity in AI recruiting is real, but so is the gap between buying tools and actually using them well. The organizations pulling ahead in 2026 aren't the ones with the most sophisticated stack. They're the ones that were deliberate: clear on where AI helps, honest about where humans still need to lead, and disciplined enough to measure what's actually working.

AI handles the noise; people make the calls that matter. This division of labor, when done well, is what better hiring looks like. If you're evaluating where to start (or where to go next), explore our AI recruiting software category to see which platforms are built for the outcomes that actually matter in 2026.